A unit block that I worked on was interesting. It is not memoryless, and with a certain optimization one can precompute part of the next output. As a result, the block can deliver a correct output almost immediately, as soon as the current input has been taken into account, even though the rest of its processing only finishes some time later.

At first I didn't pay much attention to this. I thought it was just a waste: as long as the computation has not really finished, I still can't trigger the block for its next operation, even though I already have its output.

It was not until I had to build a bigger system by cascading these blocks that I realized how it saved my day. When three such blocks are cascaded, the second one has to wait for the output of the first, and the last one for the output of the second. Because each block hands its output to the next stage as soon as possible, the total time needed for the cascaded operation is shortened!
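To put rough numbers on this, here is a minimal sketch in Python. The function name `cascade_output_time` and the timings (output ready after 1 time unit, full processing done after 4) are made up for illustration and are not measurements from the real block. It assumes each block starts as soon as the previous block's output is ready.

```python
def cascade_output_time(blocks, early_output=True):
    """Return the time at which the last block's output is available.

    blocks is a list of (output_latency, total_time) pairs, one per stage:
    output_latency is how long a block needs before its output is ready,
    total_time is how long its whole processing takes.
    These names and values are illustrative assumptions.
    """
    start = 0  # time at which the current block receives its input
    for output_latency, total_time in blocks:
        if output_latency > total_time:
            raise ValueError("output cannot be ready after processing ends")
        # With early output the next stage starts once the output is ready;
        # otherwise it has to wait until the whole processing is finished.
        start += output_latency if early_output else total_time
    return start


# Three identical blocks with hypothetical timings.
blocks = [(1, 4)] * 3
print(cascade_output_time(blocks))                      # early output
print(cascade_output_time(blocks, early_output=False))  # output at the end
```

With these assumed timings the last output is available after 3 time units instead of 12. Each block is still busy until its own processing ends, so the gain is in the latency of the cascade, not in how soon a single block can be triggered again.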

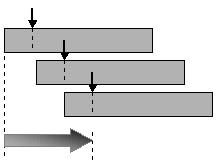

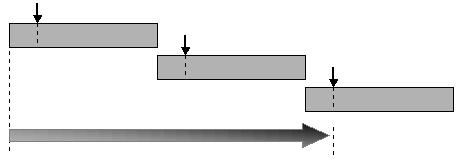

The graphs above show the idea. Take the x-axis as time and it should be clear. Each small arrow marks the moment when a gray block's output is ready to be fetched and (in this configuration) fed to the next block. The big arrow shows how long it takes until the last stage's output is available. That is shorter than in the case where each block hands over its output only at the end of its processing.

Optimization rocks!
